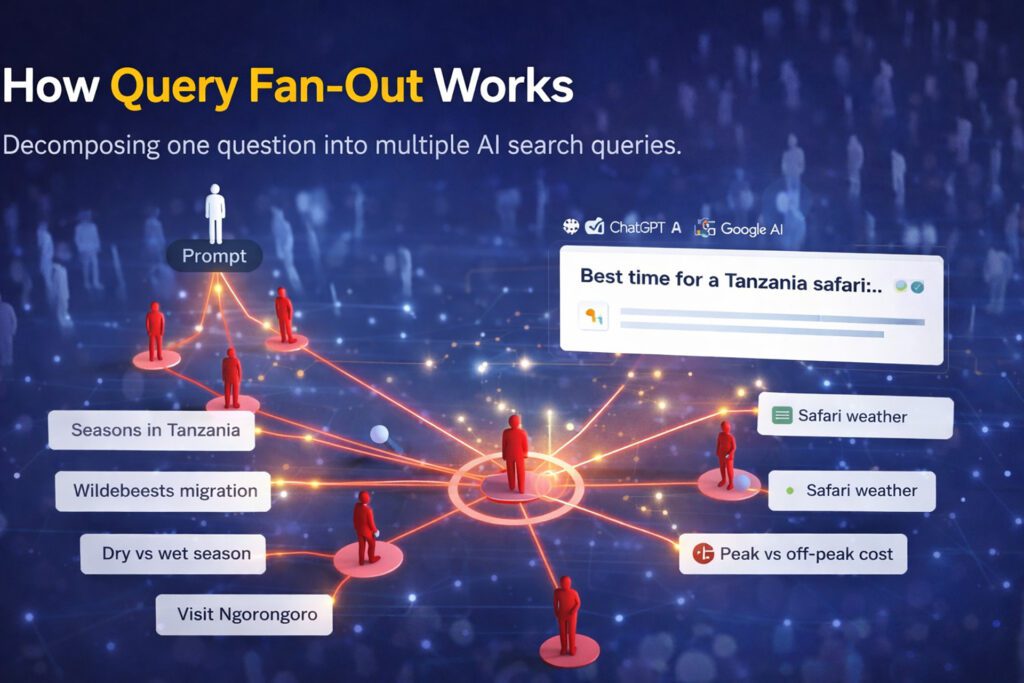

When someone types a question into Google AI Mode or ChatGPT, the AI doesn’t search for what they typed. Not exactly. It uses a process called query fan-out, and what happens under the hood is more interesting, and more useful for your content strategy, than almost anything happening in traditional SEO right now.

Once you understand it, you’ll never look at keyword research the same way again.

What Query Fan-Out Actually Is

Short version: the AI takes one prompt and explodes it into multiple smaller searches.

Someone asks: “What’s the best time to visit Tanzania for a safari?”

The AI doesn’t search that phrase. It generates something closer to this:

- Tanzania safari seasons by month

- Serengeti wildebeest migration timing

- Tanzania dry season vs wet season wildlife viewing

- best time to visit Ngorongoro Crater

- Tanzania safari weather patterns

- peak vs off-peak safari costs Tanzania

Six sub-queries. Maybe more. Each one pulls different sources. The final answer the user reads is a synthesis of everything retrieved across all of them.
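To make the flow concrete, here is a minimal Python sketch of the decompose, retrieve, and merge steps described above. It is a toy model, not how Google or OpenAI implement it: the `fan_out` and `retrieve` functions, the `TOY_INDEX` data, and every URL are hypothetical stand-ins.

```python
from collections import defaultdict

# Hypothetical index: sub-query -> URLs a retriever might return.
# Every URL here is made up for illustration.
TOY_INDEX = {
    "Tanzania safari seasons by month": ["example.com/seasons", "example.org/calendar"],
    "Serengeti wildebeest migration timing": ["example.com/migration"],
    "Tanzania dry season vs wet season wildlife viewing": ["example.com/seasons"],
}


def fan_out(prompt: str) -> list[str]:
    """Stand-in for the model's decomposition step (hard-coded here)."""
    return list(TOY_INDEX)


def retrieve(sub_query: str) -> list[str]:
    """Stand-in for one retrieval search."""
    return TOY_INDEX.get(sub_query, [])


def sources_by_coverage(prompt: str) -> dict[str, list[str]]:
    """Map each retrieved URL to the sub-queries it answered, most coverage first."""
    hits = defaultdict(list)
    for sub_query in fan_out(prompt):
        for url in retrieve(sub_query):
            hits[url].append(sub_query)
    return dict(sorted(hits.items(), key=lambda kv: len(kv[1]), reverse=True))


coverage = sources_by_coverage("What's the best time to visit Tanzania for a safari?")
for url, answered in coverage.items():
    print(url, len(answered))
```

In this toy data, the page that answers two sub-queries sorts to the top, which is the intuition behind covering more of the fan-out with each piece of content.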

Example of a query fan-out:

Google confirmed this publicly at I/O 2025 when Head of Search Elizabeth Reid described AI Mode’s approach: the system “recognizes when a question needs advanced reasoning,” calls on Gemini to “break the question into different subtopics,” and issues “a multitude of queries simultaneously.”

That’s query fan-out. That’s what’s deciding who gets cited and who doesn’t.

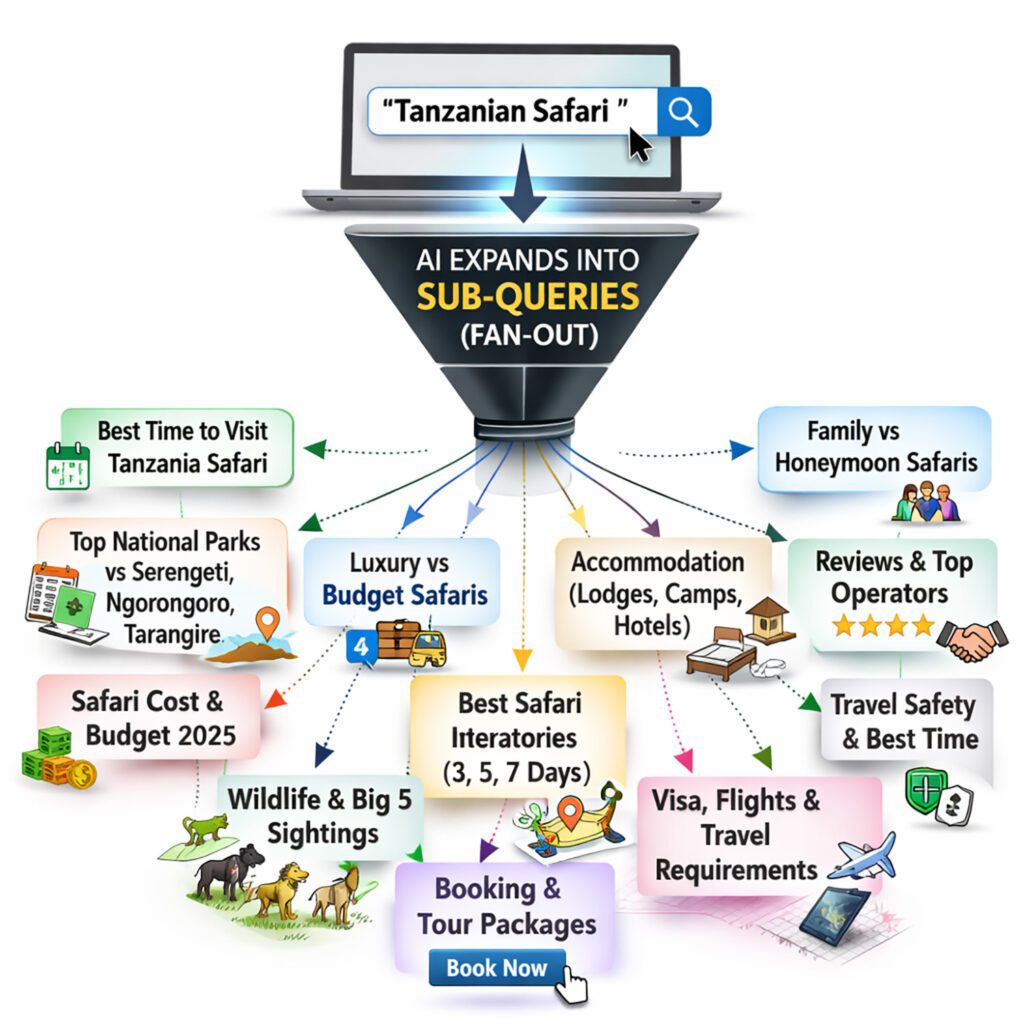

Why This Breaks Traditional Keyword Research

Traditional keyword research is built on one assumption: humans search for things, tools measure volume, you target what people actually type.

Query fan-out breaks that model completely.

| Traditional SEO assumption | What actually happens with AI search |
|---|---|
| Target the keyword people search for | AI generates sub-queries humans never type |
| High volume = high opportunity | Low-volume sub-queries get retrieved constantly |
| One page, one keyword | One prompt triggers 6–12 retrieval searches |
| Rank for the query | Cover the full fan-out or get missed |

The sub-queries AI generates are often hyper-specific. Nobody types “Tanzania dry season wildlife density by region” into a search bar. But an AI answering “best time for Tanzania safari” absolutely searches for something close to that before writing its response.

These are effectively zero-competition keywords. No human targets them. Most SEO tools don’t even register search volume for them. And yet they’re getting retrieved dozens of times a day by AI models pulling together answers for real users with real intent to book, buy, or hire.

This is the low-competition content opportunity that most agencies are sleeping on.

The Reverse-Engineering Process

Okay, so how do you actually use this?

Start with the prompt your customer would type. Not a keyword — a full question or sentence the way they’d actually phrase it to an AI. Then decompose it manually by asking: what would an AI need to research to answer this well?

Work through these angles for every prompt:

- Definitional — what does X mean, how does X work

- Comparative — X vs Y, differences between X and Z

- Cost/logistics — how much does X cost, how long does X take

- Timing/context — when is X relevant, who needs X

- Proof/validation — best X providers, X reviews, X awards

- Problem-specific — X for [specific use case or demographic]

For a digital marketing agency targeting Kenyan hospitality businesses, a prompt like “how do I get more bookings for my lodge in Kenya” fans out into things like:

- lodge marketing strategies Kenya

- direct booking vs OTA commissions East Africa

- SEO for safari lodges

- Google Business Profile for lodges

- content marketing hospitality Kenya

- best time to market safari packages…

Most of those have minimal competition. Some have almost none. And they’re all getting searched by AI systems every time someone asks a vague top-level question about lodge marketing.
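If you want a head start on the manual decomposition, the six angles can be turned into fill-in-the-blank templates. The sketch below is one possible way to do that in Python. The template wording, the `decompose` function, and the example inputs are all assumptions for illustration, and the output is a list of candidates to review by hand, not a prediction of what any AI model will actually search.

```python
# Hypothetical templates, one group per angle from the framework above.
ANGLE_TEMPLATES = {
    "definitional": ["what is {x}", "how does {x} work"],
    "comparative": ["{x} vs {alt}"],
    "cost_logistics": ["how much does {x} cost", "how long does {x} take"],
    "timing_context": ["when is {x} relevant", "who needs {x}"],
    "proof_validation": ["best {x} providers", "{x} reviews"],
    "problem_specific": ["{x} for {audience}"],
}


def decompose(x: str, alt: str, audience: str) -> dict[str, list[str]]:
    """Fill every template with the topic, an alternative, and an audience."""
    return {
        angle: [t.format(x=x, alt=alt, audience=audience) for t in templates]
        for angle, templates in ANGLE_TEMPLATES.items()
    }


# Example inputs are illustrative only.
candidates = decompose("lodge SEO", "paid ads", "safari lodges in Kenya")
for angle, queries in candidates.items():
    print(angle, queries)
```

Treat the generated phrases as prompts for your own thinking. The useful part is being forced through all six angles for every customer prompt.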

Does This Mean I Should Create Hundreds of Micro-Pages?

Not necessarily. The smarter play depends on your site’s authority.

If you’re a newer domain: Start with 8-12 comprehensive blog posts that each cover a full topic cluster. Make each post dense enough to answer multiple fan-out sub-queries within one URL. Thin pages on low-authority domains don’t accumulate trust fast enough to matter.

If you have existing authority: You can go more granular. Dedicated pages targeting specific sub-queries start picking up AI retrievals faster because the domain trust is already there to back them up.

The universal rule: Every piece of content needs to be explicit about who it recommends. Getting retrieved is step one. Getting the brand recommendation into the AI’s final response requires content that states a position clearly — not content that just covers a topic neutrally.

Fact Density Is What Closes the Loop

When an AI retrieves a page during fan-out, it’s scanning for extractable facts. Numbers, dates, named entities, verifiable claims, specific outcomes. Content that reads as vague positioning gets used as background context at best. Content packed with specific, citable details gets pulled directly into the response.

A page targeting “cost of SEO for hotels in Kenya” that says “SEO pricing varies depending on your needs” gives the model nothing to work with.

A page that states the actual monthly range for SEO retainers for hospitality businesses in Kenya, and explains how that range depends on site size, competition level, and whether technical auditing is included, gives the model something concrete to extract and relay.

Fact density isn’t about stuffing numbers in. It’s about writing content the AI can actually use as a source rather than just as vague topical context.
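For a rough self-audit, you can approximate fact density with a crude heuristic. The Python sketch below counts the share of words that contain a digit. That is an assumption chosen for simplicity: it is not how any AI model scores content, and it ignores named entities and other non-numeric facts. The "specific" sentence uses made-up figures purely to show the contrast.

```python
import re


def fact_density(text: str) -> float:
    """Share of words containing a digit: a crude proxy for numbers, dates, prices."""
    words = re.findall(r"\S+", text)
    if not words:
        return 0.0
    with_digits = sum(1 for word in words if any(ch.isdigit() for ch in word))
    return with_digits / len(words)


vague = "SEO pricing varies depending on your needs"
specific = "Audits take 2 weeks and cover 40 pages"  # hypothetical figures

print(fact_density(vague))     # no digits at all
print(fact_density(specific))  # 2 of 8 words carry a number
```

A score like this is only useful for comparing drafts of the same page. A high number does not make content citable if the figures are not real and verifiable.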

What Your Content Calendar Should Look Like After This

Stop building it around keywords with search volume. Build it around prompt clusters instead.

Pick 10 prompts your ideal customer would type into ChatGPT. Decompose each one into its fan-out sub-queries using the six-angle framework above. You’ll typically get 40-70 sub-query targets from 10 source prompts.

Cluster those sub-queries into content pieces. Some become standalone blog posts. Some become sections within a larger guide. Some become FAQ entries on service pages. The goal isn’t one piece per sub-query — it’s coverage of the full fan-out across your content ecosystem.
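The clustering step can be done by hand, but a simple word-overlap grouping gives you a first pass. The Python sketch below uses greedy Jaccard similarity against the first query in each cluster. The `cluster` function and the `0.25` threshold are assumptions you would tune for your own list, and the result still needs a human to decide which clusters become posts, guide sections, or FAQ entries.

```python
def tokens(query: str) -> set[str]:
    """Lowercased word set for a query."""
    return set(query.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two word sets, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster(queries: list[str], threshold: float = 0.25) -> list[list[str]]:
    """Greedy grouping: join the first cluster whose seed query is similar enough."""
    clusters: list[list[str]] = []
    for query in queries:
        for group in clusters:
            if jaccard(tokens(query), tokens(group[0])) >= threshold:
                group.append(query)
                break
        else:
            clusters.append([query])
    return clusters


groups = cluster([
    "Tanzania safari seasons by month",
    "Tanzania safari weather patterns",
    "Serengeti wildebeest migration timing",
])
for group in groups:
    print(group)
```

Here the two "Tanzania safari" queries land in one group, a candidate for a single guide, while the migration query stands alone.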

Then track not whether your keywords rank, but whether your brand gets recommended when those source prompts get run in ChatGPT, Google AI Mode, and Perplexity. That’s the metric that connects query fan-out strategy to actual revenue. If you’re not clear on the difference between getting cited and getting recommended, that distinction is worth understanding first — it changes how you prioritise everything in this list.
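One lightweight way to track this is a log of manual checks: run each source prompt in each tool, then record whether your brand was cited and whether it was recommended. The Python sketch below computes a recommendation rate per tool from such a log. The `Observation` structure and the sample rows are hypothetical, and the data entry is assumed to be manual.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    prompt: str
    engine: str        # e.g. "ChatGPT", "Google AI Mode", "Perplexity"
    cited: bool        # brand appeared as a source
    recommended: bool  # brand was named as a recommendation in the answer


def recommendation_rate(observations: list[Observation]) -> dict[str, float]:
    """Share of logged prompt runs per engine where the brand was recommended."""
    counts: dict[str, tuple[int, int]] = {}
    for ob in observations:
        total, recommended = counts.get(ob.engine, (0, 0))
        counts[ob.engine] = (total + 1, recommended + int(ob.recommended))
    return {engine: rec / total for engine, (total, rec) in counts.items()}


# Made-up sample log for illustration.
log = [
    Observation("how do I get more bookings for my lodge in Kenya", "ChatGPT", True, True),
    Observation("how do I get more bookings for my lodge in Kenya", "Perplexity", True, False),
    Observation("cost of SEO for hotels in Kenya", "ChatGPT", False, False),
]
rates = recommendation_rate(log)
print(rates)
```

Keeping `cited` and `recommended` as separate fields lets you see the gap between the two, which is the distinction this strategy depends on.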

The opportunity is significant right now specifically because most competitors haven’t made this shift. They’re still targeting what humans type, not what AIs search for. Those two lists overlap less than anyone in traditional SEO wants to admit.

Nairobi Marketing builds query fan-out content strategies for hospitality operators, tourism companies, and service businesses across East Africa — mapping the actual sub-queries AI models run for your target prompts, identifying the gaps, and building content that gets your brand recommended, not just cited. See how we approach AI SEO here.